Something important has shifted in how enterprise organisations think about AI. The early conversation was about models. Which one to use. How capable it was. Whether it could write SQL or summarise a contract. That conversation has been largely settled. The models are good. In many cases, they are exceptional.

The new conversation is about context. And it is more important than the model conversation ever was.

From rules to context

For most of the history of enterprise software, intelligence meant rules. A system was "smart" because someone had encoded a set of instructions: if this, then that. The value was in the ruleset — carefully constructed, constantly maintained, always a step behind the complexity of the real world it was trying to model.

The shift that large language models represent is not primarily about capability. It is about a fundamental change in how intelligence works. Instead of relying on predefined logic, modern AI systems reason from context. They do not need every situation anticipated in advance. They need the right information about the situation in front of them.

This distinction — rules versus context — changes what needs to be built to make AI actually work in an enterprise. The question is no longer "do we have the right model?" It is "do we have the right context?"

What context actually means

When we talk about context for enterprise AI, we mean something specific. Not just data. Not just documentation. The full organisational understanding — the rules, yes, but also the decision traces: the exceptions, overrides, precedents, and cross-system knowledge that currently live in people's heads, in Slack threads, in the institutional memory of long-tenured team members.

There is a useful distinction here. Rules tell an AI agent what should happen in general. Decision traces capture what actually happened in specific cases — how rules were applied, where exceptions were granted, who approved what, and why. The organisations getting real results from AI are not just those who have clean data. They are those who have found a way to make this organisational context explicit, structured, and queryable.
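To make the distinction concrete, here is a minimal sketch of how a rule and its decision traces might be held as separate records. It is illustrative only: the class names, fields, rule ID, suppliers, and approvers are all hypothetical, and it is not a description of how any particular product stores this information.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Rule:
    """What should happen in general (hypothetical structure)."""
    rule_id: str
    description: str


@dataclass(frozen=True)
class DecisionTrace:
    """What actually happened in one specific case (hypothetical structure)."""
    rule_id: str       # the rule that was applied or overridden
    supplier: str
    decided_on: date
    outcome: str       # "applied" or "exception"
    approved_by: str
    reason: str


def exceptions_for(rule_id: str, traces: list[DecisionTrace]) -> list[DecisionTrace]:
    """Return past exceptions to a rule, most recent first."""
    matches = [t for t in traces if t.rule_id == rule_id and t.outcome == "exception"]
    return sorted(matches, key=lambda t: t.decided_on, reverse=True)


# Invented example data.
rule = Rule("PAY-60", "Standard payment terms are 60 days")
traces = [
    DecisionTrace("PAY-60", "Acme Ltd", date(2023, 3, 1), "applied", "j.smith", "standard terms"),
    DecisionTrace("PAY-60", "Borealis GmbH", date(2024, 1, 15), "exception", "cfo",
                  "sole-source supplier, 30 days agreed"),
    DecisionTrace("PAY-60", "Cobalt SA", date(2022, 6, 9), "exception", "cpo",
                  "early-payment discount"),
]

for t in exceptions_for(rule.rule_id, traces):
    print(t.decided_on, t.supplier, t.approved_by, t.reason)
```

The rule on its own says only "60 days". The traces are what let a buyer or an agent ask who has been allowed to deviate, when, and why.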

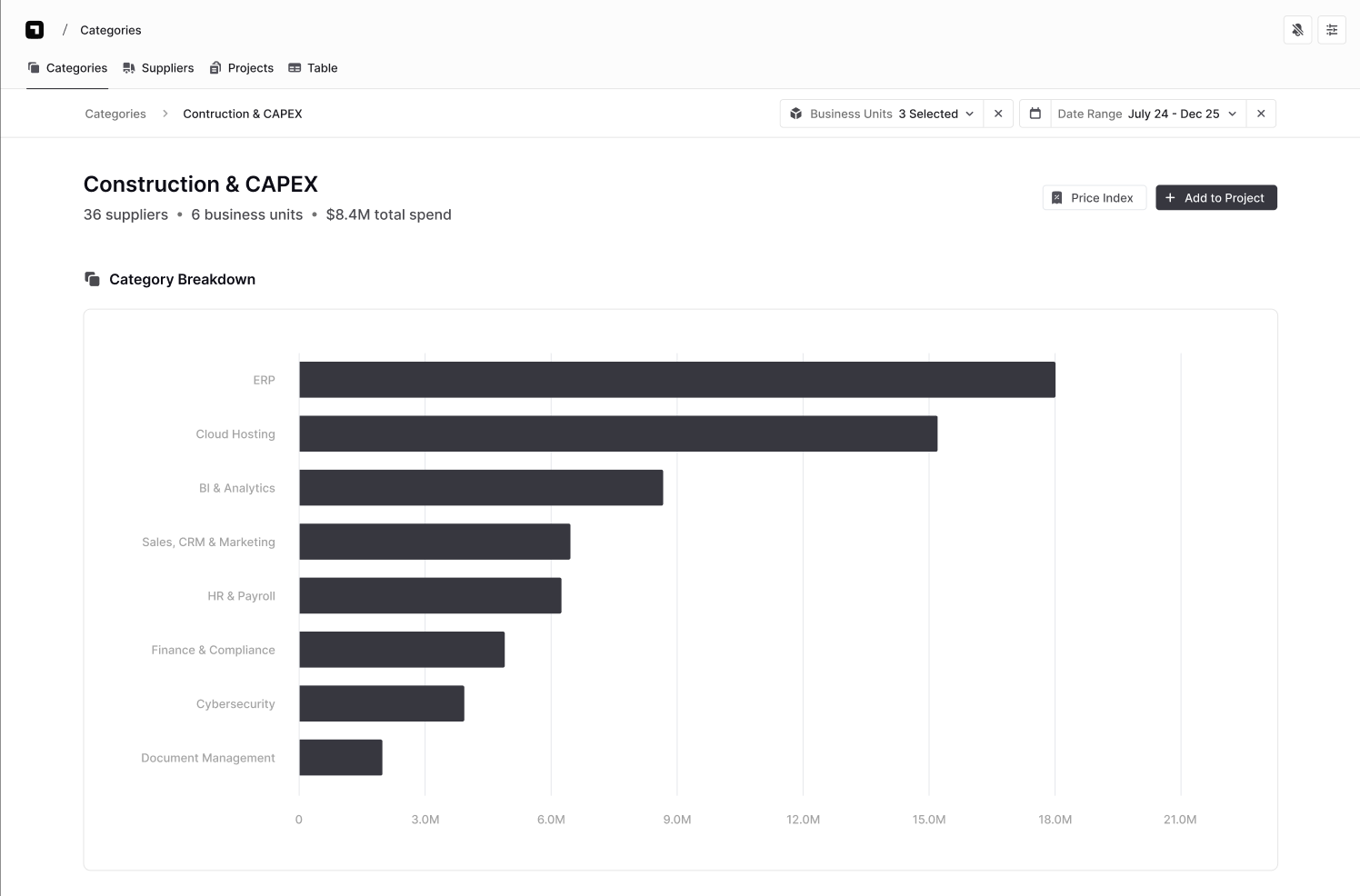

For a buyer preparing for a negotiation, context means: what have we spent with this supplier historically, how does that compare to the market, what commitments are we under, where is our leverage, what has worked before. For an AI agent operating autonomously in a procurement workflow, it means exactly the same things.

This is what makes spend data so important — and so interesting.

Why procurement data is the defining context challenge

Procurement sits on one of the richest contextual datasets in the enterprise. Every invoice, every supplier relationship, every category decision, every contract term — all of it is encoded in spend data. If you can structure and understand that data properly, you have a real-time picture of how the organisation creates (or destroys) value through its commercial relationships.

The challenge is that procurement data is also one of the most structurally complex datasets in the enterprise to work with.

A typical large organisation processes transactions across multiple ERP systems, procurement platforms, expense tools, and corporate card programmes — across different geographies, legal entities, and fiscal calendars. Each system captures data differently. Supplier names vary: the same strategic supplier might appear across systems as a dozen distinct records through different spellings, legal entity variations, and acquired subsidiaries. Category taxonomies overlap and diverge. Intercompany transactions sit alongside external spend and need to be understood separately.
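As a rough illustration of the supplier-name problem, the sketch below groups raw supplier records under a normalised key. It is a deliberately naive approach under stated assumptions: the suffix list and the alias table for acquired subsidiaries are hypothetical and hand-maintained, names are assumed to be plain ASCII, and real supplier matching needs considerably more than string clean-up.

```python
import re
from collections import defaultdict

# Hypothetical list of legal-form suffixes; a real list would be far longer.
LEGAL_SUFFIXES = {"ltd", "limited", "inc", "corp", "corporation", "gmbh", "sa", "llc", "plc"}

# Hypothetical alias table: acquired subsidiaries cannot be detected from
# spelling alone, so they are mapped to their parent explicitly.
ALIASES = {"northwind logistics": "acme"}


def supplier_key(name: str) -> str:
    """Lower-case, strip punctuation and trailing legal suffixes, apply aliases."""
    tokens = re.sub(r"[^a-z0-9 ]", " ", name.lower()).split()
    while tokens and tokens[-1] in LEGAL_SUFFIXES:
        tokens.pop()
    key = " ".join(tokens)
    return ALIASES.get(key, key)


def group_suppliers(names: list[str]) -> dict[str, list[str]]:
    """Group raw supplier names by their normalised key."""
    groups = defaultdict(list)
    for name in names:
        groups[supplier_key(name)].append(name)
    return dict(groups)


# Invented example records, as they might appear across different systems.
records = ["ACME Ltd.", "Acme Limited", "acme, inc", "Northwind Logistics GmbH", "Globex Corp"]
groups = group_suppliers(records)
for key, variants in groups.items():
    print(key, "<-", variants)
```

Even this toy version shows where the difficulty lies: spelling variants can be cleaned mechanically, but the subsidiary link only exists because someone wrote it into the alias table.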

None of this is a failure of any individual system. It is the natural result of how global organisations grow, acquire, and operate. But it means that the context layer for procurement data cannot be built by a general-purpose data tool. It requires domain-specific infrastructure.

And critically — most of the context that makes procurement data meaningful is never captured in any system at all. Why a particular category was structured the way it was. Which suppliers have informal agreements that don't appear in contracts. How spend patterns should be read given a specific business unit's seasonal dynamics. The category manager who knew all of this has moved on. The knowledge left with them.

This is what "missing context" actually means in procurement. Not that the data is dirty or siloed — though it often is — but that the reasoning connecting data to decisions was never treated as data in the first place.

The Deducta enterprise knowledge graph

Deducta's Agentic Framework is built around four components: Model, Context, Memory, and Tools. The model provides reasoning capability. The tools provide procurement-specific skills, while Context and Memory are what make the system reliable in practice.

Context means Deducta understands the entirety of an organisation's spend, suppliers, and actions in real time. Not a static snapshot. A live, structured, organisationally specific picture of what is happening commercially, across every ERP, every geography, every business unit.

Memory is what lets the system improve with use. Deducta's persistent memory layer encodes institutional knowledge and best practices across the entire organisation, and crucially, it grows over time. Every decision, every correction, every exception a procurement team makes becomes part of the organisation's context. The tribal knowledge that used to live only in people's heads starts to live in a queryable system.

What this creates, over time, is a knowledge graph: a living record of how decisions were made, stitched across entities and time, so that precedent becomes searchable. Not just what happened, but why it was allowed to happen. Not just the spend figure, but the organisational reasoning behind it.

For procurement, this matters enormously. Category decisions are not made in isolation. They are made in the context of prior decisions, market conditions, supplier relationships, and organisational constraints that shift over time. A system that can only answer "what did we spend?" is useful. A system that can answer "what did we spend, how does that compare to precedent, and what were the conditions under which previous exceptions were granted?" is transformative.
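One way to picture "searchable precedent" is a small graph in which suppliers, decisions, reasons, and approvers are nodes joined by typed edges. The sketch below is a toy model only: the `DecisionGraph` class, the node identifiers, and the relation names are assumptions made for illustration, not Deducta's schema or API.

```python
from collections import defaultdict


class DecisionGraph:
    """A toy graph of (subject, relation, object) edges."""

    def __init__(self) -> None:
        self._out = defaultdict(list)  # node -> list of (relation, node)

    def add(self, subject: str, relation: str, obj: str) -> None:
        self._out[subject].append((relation, obj))

    def neighbours(self, node: str, relation: str) -> list[str]:
        """Nodes reachable from `node` over edges of the given relation."""
        return [o for r, o in self._out.get(node, []) if r == relation]

    def precedents(self, supplier: str) -> list[dict]:
        """For each decision linked to a supplier, collect why and who approved."""
        return [
            {
                "decision": decision,
                "because": self.neighbours(decision, "because"),
                "approved_by": self.neighbours(decision, "approved_by"),
            }
            for decision in self.neighbours(supplier, "has_decision")
        ]


# Invented example data.
g = DecisionGraph()
g.add("supplier:borealis", "has_decision", "decision:2024-payment-terms-exception")
g.add("decision:2024-payment-terms-exception", "because", "sole-source supplier")
g.add("decision:2024-payment-terms-exception", "approved_by", "role:cfo")

for p in g.precedents("supplier:borealis"):
    print(p)
```

The spend figure would sit alongside these nodes. What the graph adds is the path from a supplier to the reasoning behind each exception.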

A foundation, not a replacement

This data foundation does not replace the tools that sit above it. It is what lets those tools work properly.

The procurement function has never lacked for analytics software, sourcing platforms, or supplier management tools. What it has often lacked is the data quality and contextual richness to use those tools to their full potential. The same pattern is now repeating with AI: organisations are deploying agents on top of spend data that is not yet structured in a way that supports reliable reasoning, and finding that the outputs cannot be trusted.

Getting the foundation right is what changes that. It is the work that makes the rest of the stack — and the rest of the procurement function — perform as intended.

The goal is not to replace the buyer. It is to give every buyer the context they need to walk into a conversation — with a supplier, with leadership, with a category team — with a clear, data-backed story. That is where value is created.